The Overtake Underneath: What Bifurcated When Anthropic Passed OpenAI

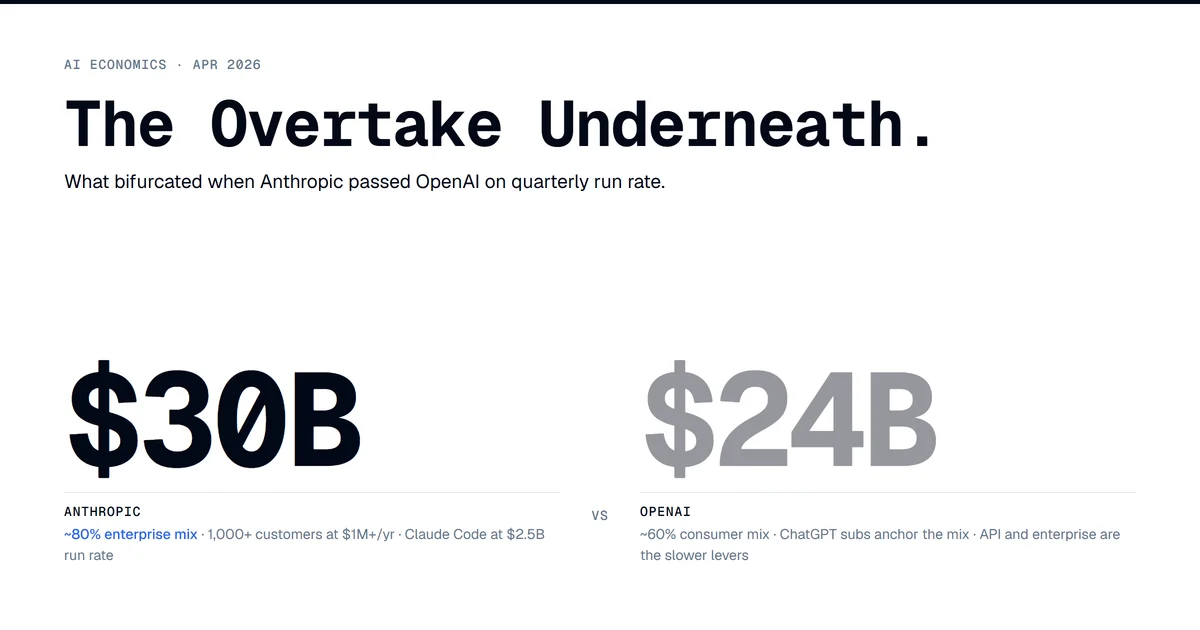

Sometime between mid-March and mid-April 2026, Anthropic's annualized run-rate revenue passed OpenAI's. Anthropic disclosed approximately $30B in ARR by early April. OpenAI sat at roughly $24B in the same window, confirmed at $2B/month. Press framing settled on "Claude won enterprise." That framing is correct, but it undersells what actually happened.

The cleaner cut: the model layer bifurcated by buyer surface. ChatGPT keeps consumer (800M+ weekly actives, ~50M paying subs, an ad tier going live, a $100/mo Pro on top of $20/mo Plus). Claude takes agentic-enterprise spend (1,000+ companies at $1M+/yr committed, Claude Code at $2.5B+ ARR, ~80% of revenue from API plus enterprise commitments). Same model layer. Two completely different commercial surfaces underneath.

That bifurcation reframes Bain's $2T sector revenue target for 2030, and it forces a recommendation question on every builder paying for both APIs: which lab for which workload. The answer is no longer symmetric.

Two Revenue Mixes That Are Roughly Inverse Images

Anthropic's mix per Sacra's tracker and SaaStr's reporting: roughly 70-75% API (pay-per-token, including Bedrock and Vertex distribution), 10-15% subscriptions, with reserved enterprise capacity as a separate stream. About 80% of total revenue is enterprise/developer workloads. As of October 2025 the company reported 300,000+ business customers driving that 80%. By April 2026 the $1M+/yr tier crossed 1,000 companies, doubled from 500+ in February.

The growth shape is the part the headline numbers undersell. ARR moved $87M (Jan 2024) → $1B (Dec 2024) → $9B (end of 2025) → $14B (Feb 2026) → $19B (March) → $30B (April). The $14B-to-$30B jump in roughly eight weeks is what triggered the press cycle. Dario Amodei's framing was that the company's own forecasts had been outstripped by a factor of eight.
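To make the growth shape concrete, here is a minimal sketch that turns the ARR milestones quoted above into step-over-step multiples. The figures are the ones in the text; the function and variable names are my own, and the steps cover very different time spans, so the multiples are not annualized or directly comparable to each other.

```python
# ARR milestones as quoted in the text, in billions of USD (run-rate).
arr_milestones = [
    ("Jan 2024", 0.087),
    ("Dec 2024", 1.0),
    ("end of 2025", 9.0),
    ("Feb 2026", 14.0),
    ("Mar 2026", 19.0),
    ("Apr 2026", 30.0),
]


def step_multiples(milestones):
    """Return (from_label, to_label, multiple) for each consecutive pair."""
    return [
        (a_label, b_label, b_value / a_value)
        for (a_label, a_value), (b_label, b_value) in zip(milestones, milestones[1:])
    ]


multiples = step_multiples(arr_milestones)
for start, end, multiple in multiples:
    print(f"{start} -> {end}: {multiple:.2f}x")

# The Feb -> Apr 2026 jump that triggered the press cycle.
feb_to_apr = arr_milestones[-1][1] / arr_milestones[3][1]
print(f"Feb 2026 -> Apr 2026: {feb_to_apr:.2f}x")
```

On these numbers the February-to-April step is slightly more than a doubling, which is why it reads so differently from the earlier, longer intervals.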

OpenAI's mix per Sacra's OpenAI page: roughly 60-65% from ChatGPT subscriptions (Plus, Pro, Team, Go), 15-25% API, 10-15% enterprise/Microsoft revenue share, and a new ads stream. Enterprise specifically crossed 40% of revenue and is on track for parity with consumer by year-end, driven by 9M+ paying business users as of February 2026.

Consumer scale: 800M weekly active users by October 2025, 900M by February 2026, ~50M paying subscribers. The $8/mo ChatGPT Go tier went global in January 2026, targeting 122M paying subs by year-end. Ads launched in beta on Feb 9, 2026 on Free and Go, projected at $2.4-2.5B in 2026 ad revenue.

Anthropic ~80% enterprise / ~20% consumer-and-prosumer. OpenAI ~60% consumer / ~40% enterprise. Same total-addressable-customer base on paper. Two different monetization surfaces in practice.
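A rough way to see the inverse mixes in dollars is to apply those round shares to the headline run-rates. This is a sketch under loud assumptions: the shares and the ARR figures come from different sources and dates, so the outputs are order-of-magnitude illustrations, not reported numbers. The helper name is hypothetical.

```python
def split_revenue(total_arr_b, enterprise_share):
    """Split a run-rate (in $B) into enterprise and consumer buckets."""
    enterprise = total_arr_b * enterprise_share
    return {"enterprise": enterprise, "consumer": total_arr_b - enterprise}


# Assumption: apply the approximate mix shares from the text to headline ARR.
anthropic = split_revenue(30.0, 0.80)  # ~80% enterprise
openai = split_revenue(24.0, 0.40)     # ~40% enterprise

for name, mix in (("Anthropic", anthropic), ("OpenAI", openai)):
    print(f"{name}: enterprise ~${mix['enterprise']:.1f}B, "
          f"consumer ~${mix['consumer']:.1f}B")
```

If the shares hold, the enterprise gap between the two companies is far wider than the headline ARR gap, and the consumer gap runs the other way.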

What "1,000+ at $1M+" Actually Looks Like

The $1M+/yr customer count is the operative metric. Two years ago that figure was a dozen. In February 2026 it was 500+. By April it was 1,000+. The doubling cadence flipped sell-side conviction in the eight weeks before the overtake.

Named customers tell the workflow story:

- Lyft runs Claude via Bedrock on its customer care assistant. Public Lyft + Anthropic disclosure: average resolution time down 87%, thousands of daily requests routed through Claude, with Anthropic engineers embedded to train Lyft's team on agentic patterns. This is an agent layer running in the live customer pipeline, not a chatbot demo.

- Pfizer uses Claude through Bedrock for drug-development document search across ~20,000 docs per project, saving ~16,000 search-hours/year per drug program and cutting infrastructure cost by 55%.

- Snowflake signed a $200M, multi-year agreement to make Claude available across 12,600+ Snowflake customers. Distribution-as-procurement: Snowflake's enterprise contract becomes Anthropic's distribution channel.

- Uber reportedly burned its full 2026 AI budget on Claude Code and Cursor in four months.

- Notion ships Claude as a delegate-to-AI surface inside Custom Agents.

- Deloitte (470,000 employees), Cognizant (350,000 associates), GitHub, GitLab, Air India, and Maryland state government are the named institutional anchors per Sacra. Eight of the Fortune 10 are customers.

The pattern is consistent. Agentic deployments in customer-facing workflows (Lyft), regulated knowledge work (Pfizer), procurement-platform distribution (Snowflake), embedded-in-product agents (Notion), developer tools (Cursor and Claude Code). Bottom-up adoption that escalates to seven-figure procurement.

The Capital Bases Are Denominated Differently

OpenAI raised $122B at an $852B post-money valuation in April, in a round led by SoftBank with Andreessen Horowitz and anchored by Amazon, Nvidia, and Microsoft. Anthropic is in talks for a $50B round at a $900B valuation on top of Google's $40B commitment ($10B at $350B + $30B on milestones + 5GW dedicated TPU) and Amazon's $5B + $100B AWS spend commitment.

Anthropic's capital is denominated more in compute commitments than dollars. That matches the company profile as a training-cost-disciplined enterprise-revenue operator. OpenAI's capital base is dollar-denominated and dependent on the SoftBank-led syndicate continuing to fund a projected $121B in compute spend in 2028 against $85B in losses that year. Anthropic's training spend peaks at approximately $30B, roughly one-quarter of OpenAI's projected number, with profitability targeted for 2028-2029.

The companion piece on the financing layer covered the broader migration: hyperscaler capex moving into ABS, CMBS, private credit, and JV vehicles at $250-300B in 2026 issuance per Morgan Stanley. The Anthropic capital structure fits inside that pattern. Compute-denominated commitments from cloud sponsors that also distribute the model. Vertical capital plus vertical demand.

Counterargument: OpenAI Disputes the Comparison

Worth handling directly because it has real merit. OpenAI's CRO disputed the $30B figure in an April 13 internal memo, single-sourced via Remio, arguing Anthropic's gross-revenue accounting overstates results by approximately $8B when you account for AWS and Google Cloud distribution cuts. Bedrock and Vertex split revenue with Anthropic. Whether the company books gross or net materially changes the headline. Under OpenAI's preferred net-revenue methodology, Anthropic's comparable figure would be ~$22B, still healthy but not above OpenAI's $24B.

Reasonable read: the overtake is real on growth velocity, where the eight-week doubling speaks for itself. The absolute crossover is sensitive to accounting convention and depends on which methodology you apply to which company. If your operative number is trajectory, the overtake stands. If your operative number is level on like-for-like net accounting, OpenAI's pushback lands and the crossover hasn't happened yet.
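The sensitivity is easy to sketch with the figures above. The assumption here, which is OpenAI's framing rather than an established fact, is that OpenAI's $24B is already on a comparable net basis and that the full disputed amount comes off Anthropic's number. Names are my own.

```python
# Figures quoted in the text, in billions of USD of annualized run-rate.
ANTHROPIC_GROSS_B = 30.0   # Anthropic's disclosed ARR
OPENAI_ARR_B = 24.0        # OpenAI's confirmed run-rate
DISPUTED_CUT_B = 8.0       # overstatement claimed in the reported memo


def anthropic_net(gross_b, distribution_cut_b):
    """Net run-rate after removing the cloud distribution cut."""
    return gross_b - distribution_cut_b


def has_crossed(anthropic_b, openai_b):
    """True if Anthropic's figure is above OpenAI's."""
    return anthropic_b > openai_b


gross_view = has_crossed(ANTHROPIC_GROSS_B, OPENAI_ARR_B)
net_b = anthropic_net(ANTHROPIC_GROSS_B, DISPUTED_CUT_B)
net_view = has_crossed(net_b, OPENAI_ARR_B)

# Largest distribution cut at which the crossover still holds.
breakeven_cut_b = ANTHROPIC_GROSS_B - OPENAI_ARR_B

print(f"gross basis: crossed={gross_view}")
print(f"net basis (${net_b:.0f}B): crossed={net_view}")
print(f"crossover survives only if the cut is under ${breakeven_cut_b:.0f}B")
```

The whole dispute reduces to whether the true distribution cut sits above or below that break-even figure.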

The other pressure on the thesis is that consumer monetization at OpenAI is an unpulled lever. The ad business launched in beta on Feb 9 and is only a couple of months old. Targeting $2.4-2.5B in 2026 ad revenue with a path to ~$100B/yr by 2030 is the bull case, and 800M+ weekly users at any reasonable RPM produces a serious ad business. If that monetization path executes, the bifurcation looks more like a 2026 snapshot than a 2030 equilibrium. Counter to the counter: ad-supported AI inference is unproven economics. OpenAI's 33% gross margin and $14.1B projected 2026 inference cost say the marginal token cost of every ad-supported query is real and rising.

Why the Bain $2T Math Got Harder

Bain's September 2025 report modeled AI sector compute demand at 200 GW of incremental power by 2030, requiring approximately $500B in annual capex and ~$2T/yr in new revenue to be fundable on commercial economics. The model assumed every plausible offset (full IT-budget shift to cloud, full reinvestment of ~20% AI savings from sales, marketing, customer service, and R&D). The report still flagged an $800B/yr gap.

Anthropic at $30B plus OpenAI at $24B is $54B. That is 2.7% of the $2T target between the two leaders. Add Google's AI revenue contribution, Microsoft's AI segment, AWS's Bedrock margin, Meta's first-party ads attribution, and the rest of the field, and the sector lands somewhere in the low hundreds of billions of total annualized AI revenue. Getting to $2T by 2030 requires the leaders to compound at >40% CAGR through 2030 and the long tail of enterprise applications to materialize at scale.
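Here is the arithmetic as a small sketch. The two leaders' share uses the figures in the text. The sector starting bases ($200B, $300B, $400B) are hypothetical stand-ins for "low hundreds of billions", and the four-year horizon (2026 to 2030) is my assumption, so treat the growth rates as illustrative.

```python
def required_cagr(start, target, years):
    """Compound annual growth rate needed to go from start to target."""
    return (target / start) ** (1 / years) - 1


TARGET_B = 2000.0            # Bain's ~$2T/yr revenue requirement
leaders_b = 30.0 + 24.0      # Anthropic + OpenAI run-rate, in $B
leaders_share = leaders_b / TARGET_B
print(f"Two leaders: ${leaders_b:.0f}B = {leaders_share:.1%} of target")

# Hypothetical sector bases for "low hundreds of billions" (assumption).
YEARS = 4  # assumed horizon: 2026 -> 2030
rates = {base: required_cagr(base, TARGET_B, YEARS) for base in (200.0, 300.0, 400.0)}
for base, rate in rates.items():
    print(f"From ${base:.0f}B: {rate:.1%} per year for {YEARS} years")
```

Every base in that range implies sector-wide growth comfortably above 40% a year, which is the point of the paragraph above.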

The bifurcation makes this harder, not easier, in two ways. Consumer-side revenue is concentrated in one company and depends on AI ads clearing both inference cost and a search-RPM cannibalization risk that has no precedent. Enterprise-side revenue scales by procurement contract, and procurement is slow. The first 1,000 customers at $1M+ moved fast because the easy adopters always do. The next 1,000 takes longer.

This connects to the macro case I made on April 10 in the $650B AI capex disconnect: the spending was running ahead of measurable productivity, and the financing question was downstream of whether the revenue layer caught up. Q1 2026 hyperscaler earnings raised the 2026 capex number to ~$725B. The revenue layer has more compounding to do.

What This Means for Builders

The bifurcation produces clean recommendations now that didn't exist a quarter ago.

Agentic enterprise workloads (customer service, document processing, regulated knowledge work, internal tooling): Claude is the default. Lyft's customer-care deployment, Pfizer's document search, Notion's delegated agents, and the Cursor and Claude Code developer surface are the proof points. Pricing comparison: Claude Opus 4.7 at $5/$25 per 1M input/output tokens vs GPT-5.5 at $5/$30 makes Claude about 17% cheaper on output, the dominant cost line in agentic workloads.
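To see what that price gap means on a bill, here is a minimal cost sketch. The per-token prices are the ones quoted above. The monthly token volumes are hypothetical, picked so that output cost dominates, and the function name is mine. It also ignores caching, batch discounts, and differences in how many tokens each model uses for the same task.

```python
# (input, output) list prices in USD per 1M tokens, as quoted in the text.
PRICES = {
    "Claude Opus 4.7": (5.0, 25.0),
    "GPT-5.5": (5.0, 30.0),
}


def workload_cost(input_tokens, output_tokens, price_in, price_out):
    """Cost in USD for a token volume at per-1M-token prices."""
    return input_tokens / 1e6 * price_in + output_tokens / 1e6 * price_out


# Hypothetical monthly agentic workload (assumption, not from the text).
INPUT_TOKENS = 200_000_000
OUTPUT_TOKENS = 100_000_000

costs = {
    model: workload_cost(INPUT_TOKENS, OUTPUT_TOKENS, p_in, p_out)
    for model, (p_in, p_out) in PRICES.items()
}
for model, cost in costs.items():
    print(f"{model}: ${cost:,.0f}/month")

saving = 1 - costs["Claude Opus 4.7"] / costs["GPT-5.5"]
print(f"Blended saving on this mix: {saving:.1%}")
```

Because input prices are identical, the blended saving is always smaller than the gap on output alone and shrinks as the workload becomes more input-heavy.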

Consumer-facing applications, search-substitute experiences, prosumer tools: ChatGPT is the default. 800M+ weekly users is the brand asset, $20/mo Plus with 71% six-month retention is a known-good monetization surface, and the API is mature.

Coding agents specifically: multi-model is the operative pattern. Cursor + Claude Code is the most common stack. The buyer surface tilts toward Claude, but the editor surface (Cursor, Windsurf) is model-agnostic and the right answer is to use both. If your team is choosing self-hosted vs API, the DeepSeek V4 procurement framework I wrote up still applies. Bifurcation at the lab layer doesn't change the build-vs-buy mechanics underneath.

The thing I keep going back to as someone running agent loops on personal hardware after work hours: I have been on Claude for agentic loops since Sonnet 3.5, and the only times I've reached for GPT have been consumer-flavored tasks where the brand habit was already there. That sample of one matches the revenue mix the leaders just printed. The same model layer is doing two different jobs for two different buyers, and the price tag for each is becoming separately legible.

The overtake is a data point inside that broader split. The harder question is whether both surfaces can keep compounding at the rate the financing layer has already priced in, with consumer revenue concentrated in one company and enterprise revenue gated by procurement cycles that move on quarters, not weeks.