M5 Max and Local LLMs: Why the Best AI Tools Will Never Be Open Sourced

There is a quiet shift happening in developer communities. If you track the most effective builders, you notice they have stopped sharing their repositories. They are still building, but the public build logs have dried up.

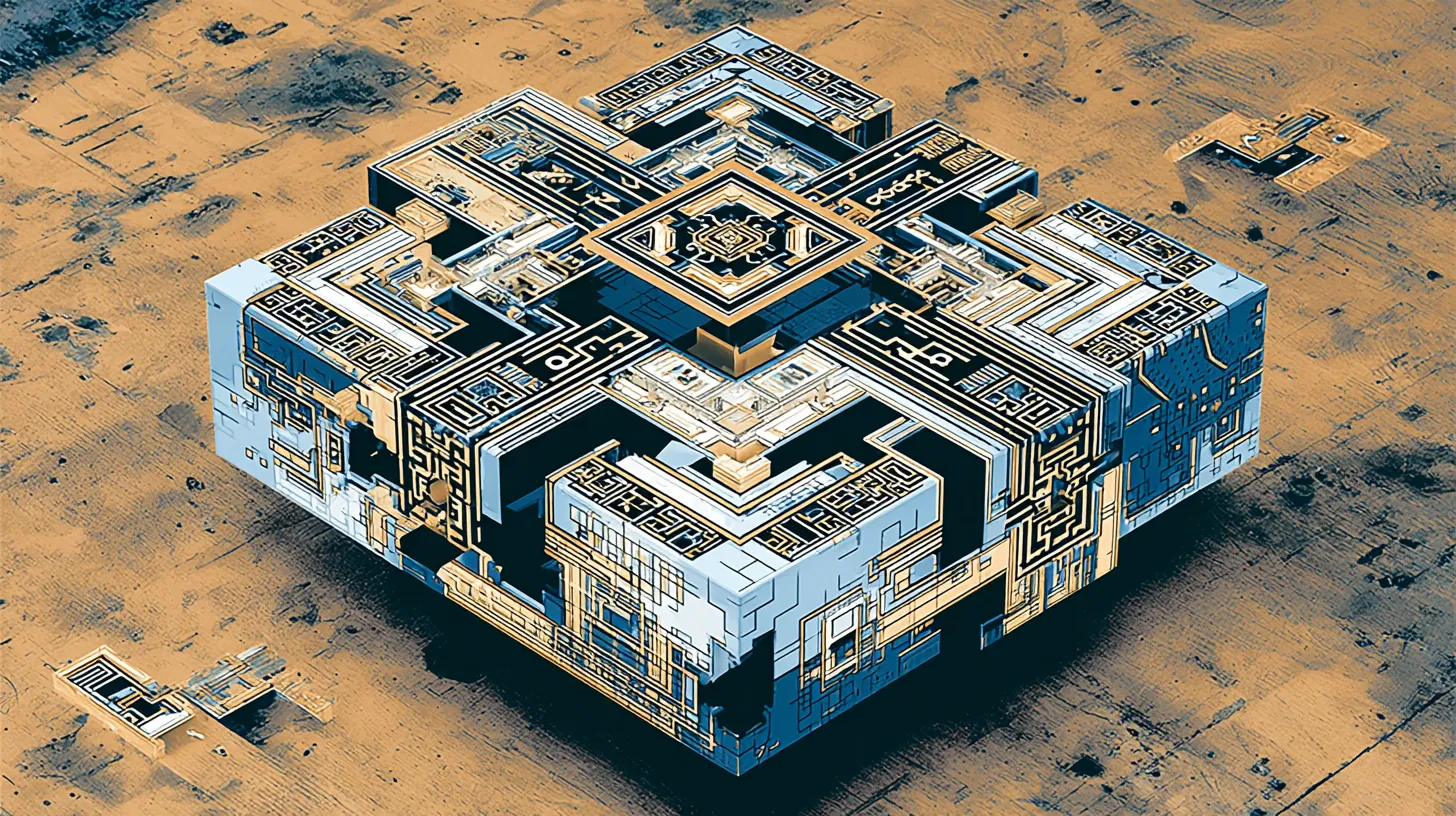

The arrival of the M5 Max chip allows laptops to run 120-billion-parameter models entirely locally. This hardware capability accelerates a move toward private, hyper-personalized AI automations. Instead of building general open-source projects for an audience, developers are creating illegible, unscalable tools designed exclusively for their own workflows.

The great silence is not a lack of innovation. It is an explosion of private leverage.

How M5 Max hardware enables local 120B models

The M5 Max chip enables server-grade inference on consumer laptops by providing enough unified memory and compute to run 120-billion-parameter models entirely on battery power. Benchmarks posted on r/LocalLLaMA report these machines running models like Qwen3.5-122B-A10B-4bit at 65.8 tokens per second.
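To see why 4-bit quantization is what makes a model of this size plausible on a laptop, here is a rough back-of-the-envelope sketch. It counts weight storage only and ignores the KV cache, activations, and quantization metadata, so real usage will be higher. The function name is made up for illustration.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    total_bits = params_billion * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


if __name__ == "__main__":
    # Compare common precisions for a 122-billion-parameter model.
    for bits in (16, 8, 4):
        print(f"122B at {bits}-bit: ~{weight_memory_gb(122, bits):.0f} GB")
```

Under these assumptions, the weights of a 122B model take roughly 244 GB at 16-bit and roughly 61 GB at 4-bit, which is the difference between needing a server and fitting in unified memory.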

When you have this level of inference sitting in your backpack, you stop worrying about API costs and rate limits. You can pipe your entire digital life into a local model without sending a single packet over the network. Your emails, messy notes, financial statements, and client drafts become raw material for a system that never leaves your local disk.

This is the end of the API-first era for solo builders. If you can run the best models locally, the incentive to build a wrapper for the public disappears.

What are dark tools?

The most effective local automations are dark tools: highly specific scripts designed for an audience of one and too brittle to ever be generalized. These tools provide massive leverage to the creator because they ignore the edge cases and UI requirements of a public product.

Take the 6:47 AM weather and email agent. One user built a local pipeline that triggers every morning. It pulls his specific calendar, checks the local surf report for his exact beach, and scans his priority inbox. It does not give a generic summary. It tells him exactly which board to grab or if he needs to be at his desk ten minutes early because a specific client in London emailed.
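A minimal sketch of what the decision step of such an agent might look like, assuming the calendar, surf, and inbox data have already been fetched. Every name, threshold, and email address here is hypothetical; the original pipeline was not published. A scheduler such as cron (`47 6 * * *`) could trigger it, which is also an assumption.

```python
from dataclasses import dataclass


@dataclass
class Morning:
    swell_ft: float              # surf height at the one beach that matters
    first_meeting_hour: int      # from the calendar, 24-hour clock
    priority_senders: list[str]  # senders found in the priority inbox overnight


# Hypothetical personal rules: the address and thresholds are invented.
URGENT_SENDERS = {"london-client@example.com"}


def briefing(m: Morning) -> str:
    """Turn the morning's raw inputs into one specific instruction."""
    if m.swell_ft < 1.5:
        surf = "Flat. Skip the surf."
    elif m.swell_ft < 4:
        surf = "Grab the longboard."
    else:
        surf = "Grab the shortboard."

    # Only one sender matters enough to change the schedule.
    if URGENT_SENDERS.intersection(m.priority_senders):
        desk = "Be at your desk ten minutes early: the London client emailed."
    else:
        desk = f"First meeting at {m.first_meeting_hour}:00, no rush."
    return f"{surf} {desk}"
```

The rules are hardcoded on purpose. There is no settings file because there is only one user, and he already knows what a 3-foot swell means for him.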

Another builder shared a receipt parser. It is a brittle script that only works because he knows exactly how his bank formats its exports. It maps to his specific accounting categories and pushes data into a private SQLite database.
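Here is a rough sketch of what a script like that could look like, assuming the bank export is a CSV with `Date`, `Description`, and `Amount` columns. The column names, the category map, and the table layout are all assumptions for illustration, not the builder's actual code.

```python
import csv
import io
import sqlite3

# Hypothetical merchant-to-category map; a real one mirrors one person's books.
CATEGORIES = {"AWS": "infrastructure", "DELTA AIR": "travel"}


def load_receipts(csv_text: str, conn: sqlite3.Connection) -> int:
    """Insert rows from an assumed 'Date,Description,Amount' export; return row count."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS receipts "
        "(date TEXT, merchant TEXT, amount REAL, category TEXT)"
    )
    rows = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        merchant = row["Description"].strip().upper()
        # A renamed column or a changed format raises here: brittle by design.
        category = next(
            (cat for key, cat in CATEGORIES.items() if merchant.startswith(key)),
            "uncategorized",
        )
        rows.append((row["Date"], merchant, float(row["Amount"]), category))
    conn.executemany("INSERT INTO receipts VALUES (?, ?, ?, ?)", rows)
    conn.commit()
    return len(rows)
```

If the bank renames a column, the script crashes with a `KeyError`. For a tool with one user, that crash is the error handling.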

These tools are illegible to anyone else. They use hardcoded file paths and lack error handling. Because they are built for a single user, they are ten times more effective than any polished SaaS product.

Why generalizing software kills its effectiveness

Generalizing a tool for a public audience introduces friction that kills the speed and utility of the original automation. Many makers are realizing that the work required to make a tool work for everyone else, like building settings menus or ensuring cross-platform compatibility, strips out the hyper-specific logic that made the tool useful in the first place.

We spent the last decade obsessed with scalable software. If you built a tool, the default path was to polish the UI and launch it to the public. When a user pointed out this exact dynamic on r/LocalLLaMA, the top response was a post titled "I feel personally attacked." It hit 2,100 upvotes. The community knows it is true.

When you build exclusively for yourself, you skip the UI entirely. You stop adding rules and simply run Python scripts triggered by keyboard shortcuts. The resulting tool looks like a mess, but it fits your specific brain perfectly.

Leverage vs. support debt: The cost of public shipping

Turning a personal automation into a public product is often a poor trade because it replaces high-leverage building with low-leverage customer support. If a script saves you five hours a week, selling it for ten dollars a month often forces you to spend those five hours explaining configuration logic to strangers.

The real solopreneur secret is keeping the most powerful tools private. If you build a local ingestion engine that reads your specific project folders and drafts your weekly client updates, that script gives you five hours of your week back.
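A minimal sketch of such an ingestion engine, assuming the project notes are Markdown files and that a local model is reachable through some function you supply. The folder path is hypothetical, and `generate` is a placeholder for whatever local runtime you use; no specific library API is implied.

```python
import time
from pathlib import Path
from typing import Callable

# Hypothetical hardcoded folder, in keeping with the audience-of-one idea.
PROJECT_DIR = Path.home() / "clients" / "acme"


def recent_notes(folder: Path, days: int = 7) -> list[Path]:
    """Markdown files under folder modified within the last `days` days."""
    cutoff = time.time() - days * 86400
    return sorted(p for p in folder.rglob("*.md") if p.stat().st_mtime >= cutoff)


def draft_update(folder: Path, generate: Callable[[str], str]) -> str:
    """Build one prompt from recent notes and hand it to a local model."""
    notes = "\n\n".join(
        f"## {p.name}\n{p.read_text(encoding='utf-8')}" for p in recent_notes(folder)
    )
    prompt = f"Draft a weekly client update from these notes:\n\n{notes}"
    return generate(prompt)
```

Calling `draft_update(PROJECT_DIR, my_local_model)` would return the draft, where `my_local_model` is any function that takes a prompt string and returns text. Keeping the model behind a plain callable means the script never needs to know which runtime sits underneath.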

The math does not work for small-scale commercialization. The leverage is in the usage, not the sale.
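The arithmetic can be sketched as follows. The ten-dollar price and five weekly support hours come from the scenario above; the subscriber counts are hypothetical, and holding support time fixed as subscribers grow is a generous simplification.

```python
WEEKS_PER_MONTH = 52 / 12


def support_hourly_rate(subscribers: int, price_per_month: float,
                        support_hours_per_week: float) -> float:
    """Revenue earned per hour of support, in dollars."""
    monthly_revenue = subscribers * price_per_month
    monthly_support_hours = support_hours_per_week * WEEKS_PER_MONTH
    return monthly_revenue / monthly_support_hours


if __name__ == "__main__":
    # Hypothetical subscriber counts at $10/month and 5 support hours/week.
    for subscribers in (10, 50, 200):
        rate = support_hourly_rate(subscribers, 10.0, 5.0)
        print(f"{subscribers} subscribers -> ${rate:.2f} per support hour")
```

At ten subscribers, this works out to under five dollars per hour of support, and that is before counting the five hours of personal time the script no longer saves.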

Building for an audience of one

I still build Tuon and public Obsidian plugins like Tuon Deep Research, but my personal local stack is getting weirder and much less legible.

If you are getting your hands on M5 Max hardware, resist the urge to build another wrapper for the public. Build something incredibly specific, entirely local, and completely useless to everyone except you. That is where the real leverage hides.